Your Agent Stack Is Only as Reliable as Its MCP Layer

MCP servers are becoming production dependencies for agent systems. Here is how to inventory their ownership, permissions, observability, and failure modes before they become hidden infrastructure risk.

A lot of teams still talk about MCP servers like they are harmless glue code.

That framing is going to age badly.

If your agents touch docs, repos, ticketing systems, databases, browsers, or SaaS tools, MCP servers are not side utilities. They are production dependencies. They sit between the model and the systems that make the model useful. When they fail, drift, slow down, or hold more permissions than they need, the breakage does not look like a connector issue. It looks like your product got weird.

If you only do one thing this quarter, do this: build an MCP dependency register. List every server, its owner, whether it is local or remote, what systems it exposes, what permissions it holds, how it is monitored, and what fails if it goes down. That exercise will tell you very quickly whether your MCP layer is infrastructure or improvisation.
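A register like this can start as one small record per server. The Python sketch below is one possible shape, not a standard: the class name `McpServerEntry`, its field names, and the two sample servers are all invented for illustration and are not part of MCP or any SDK.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class McpServerEntry:
    """One row in a hypothetical MCP dependency register."""
    name: str
    owner: str                  # a named person, not a team alias
    location: str               # "local" or "remote"
    exposed_systems: List[str]  # systems the server can reach
    permissions: List[str]      # e.g. ["read"] or ["read", "write"]
    monitoring: str             # where health and logs live; "" if none
    breaks_if_down: List[str] = field(default_factory=list)


def register_gaps(entry: McpServerEntry) -> List[str]:
    """Return the register questions this entry cannot answer yet."""
    gaps = []
    if not entry.owner.strip():
        gaps.append("owner")
    if entry.location not in ("local", "remote"):
        gaps.append("location")
    if not entry.exposed_systems:
        gaps.append("exposed_systems")
    if not entry.permissions:
        gaps.append("permissions")
    if not entry.monitoring.strip():
        gaps.append("monitoring")
    if not entry.breaks_if_down:
        gaps.append("breaks_if_down")
    return gaps


# Sample data, invented for illustration.
register = [
    McpServerEntry("repo-search", "dana", "local", ["git"], ["read"],
                   "stderr log", ["code review assistant"]),
    McpServerEntry("ticket-writer", "", "remote", ["ticketing"],
                   ["read", "write"], ""),
]

if __name__ == "__main__":
    for entry in register:
        print(entry.name, register_gaps(entry))
```

An entry with an empty gap list is not automatically safe. It only means someone has written down the answers, which is the point of the exercise.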

Why MCP changes the dependency graph

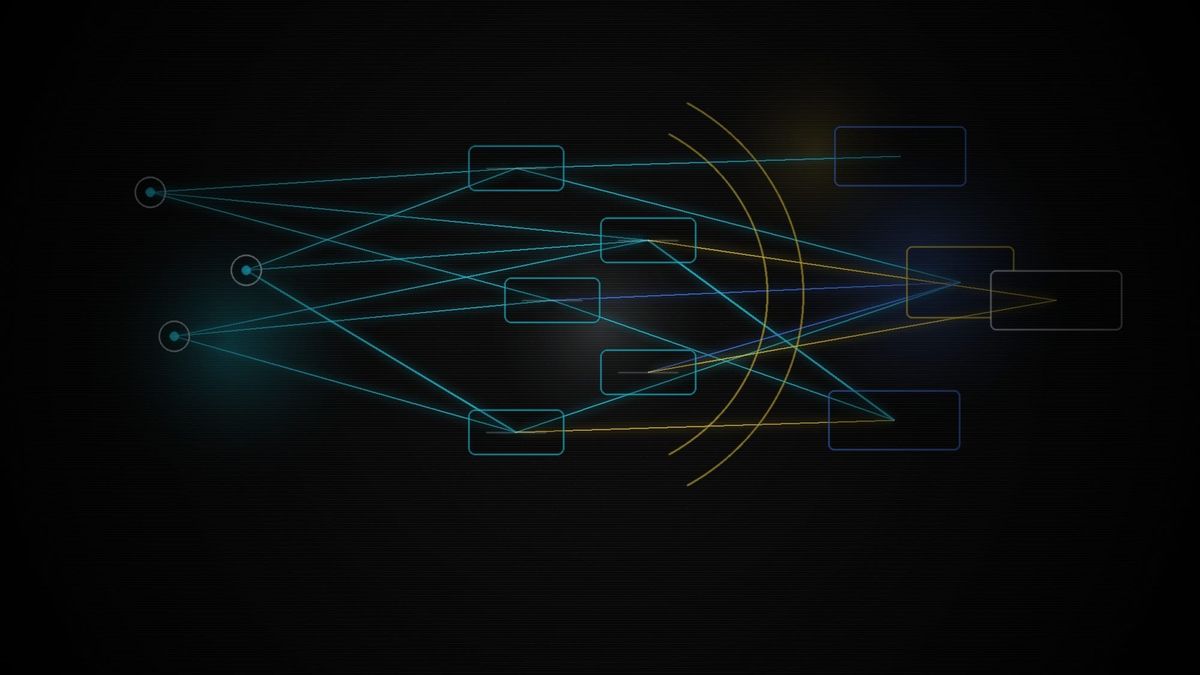

The official Model Context Protocol documentation describes MCP as an open standard for connecting AI applications to external systems. Its specification says MCP follows a client-host-server architecture, with hosts managing multiple client instances, each client maintaining an isolated 1:1 server connection, and servers exposing resources, tools, and prompts while running either locally or as remote services.

That sounds straightforward, but it has a big operational consequence: every time you add an MCP server, you add another live dependency between your agent and the real world.

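That changes your failure modes: an outage, a schema change, or a permission problem in any one server can now surface as agent misbehavior.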

The production mistake teams are about to repeat

We have seen this movie before.

First it was direct database access from internal tools. Then microservices. Then SaaS APIs. Each wave started as a speed unlock and ended as an operational discipline problem. MCP is heading the same way.

The official architecture docs define hosts as the AI applications that manage connections and permissions, clients as the protocol-level connectors that each hold one stateful session with a single server, and servers as the services that provide context and capabilities, whether as local processes or remote services. In practice, that means one agent experience can quietly depend on multiple independently owned systems.

That is where teams stop asking the old questions. Who owns this server? How is it deployed? What are its auth boundaries? What is the rollback plan? What happens if the schema changes? Can we trace which tool call touched which external system? If this server goes down, does the agent fail clearly or hallucinate around the missing capability?

Those are production questions, not protocol questions.
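One of those questions, what breaks if a server goes down, can be answered mechanically once workflows are mapped to the servers they use. The sketch below assumes such a mapping exists. The workflow names, server names, and the `blast_radius` helper are hypothetical.

```python
from typing import Dict, List, Set

# Hypothetical mapping: workflow name -> MCP servers it depends on.
WORKFLOW_SERVERS: Dict[str, Set[str]] = {
    "triage incoming bug": {"ticketing", "repo-search"},
    "draft release notes": {"repo-search", "docs"},
    "answer policy question": {"docs"},
}


def blast_radius(server: str, workflow_servers: Dict[str, Set[str]]) -> List[str]:
    """List the workflows that depend on the given server, sorted by name."""
    return sorted(
        workflow
        for workflow, servers in workflow_servers.items()
        if server in servers
    )


if __name__ == "__main__":
    for name in ("docs", "repo-search", "ticketing"):
        print(name, "->", blast_radius(name, WORKFLOW_SERVERS))
```

A server with a large blast radius and no named owner is the first place to look.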

What good MCP discipline looks like

Start with ownership.

Every MCP server needs a named owner, not a vague team alias. Someone has to approve changes, review permissions, handle incidents, and decide whether the server is still worth running. If nobody owns it, the agent stack is already depending on abandoned infrastructure.

Then define the trust level.

A local read-only server used by one developer is not the same risk category as a remote shared server that can write into production systems. Treat those differently. The mistake is not using both. The mistake is governing both like they are just handy extensions.
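One way to make that distinction explicit is to derive a review tier from a few facts about each server. The three-tier scheme below is an assumption made for illustration, not something MCP defines. The tier names and the `trust_tier` function are hypothetical.

```python
from enum import Enum


class TrustTier(Enum):
    LOW = "low"            # e.g. local, single-user, read-only
    ELEVATED = "elevated"  # any one risk factor present
    HIGH = "high"          # remote, shared, and able to write


def trust_tier(location: str, shared: bool, can_write: bool) -> TrustTier:
    """Map three facts about a server to a review tier (assumed scheme)."""
    if location == "remote" and shared and can_write:
        return TrustTier.HIGH
    if location == "remote" or shared or can_write:
        return TrustTier.ELEVATED
    return TrustTier.LOW


if __name__ == "__main__":
    print(trust_tier("local", shared=False, can_write=False))
    print(trust_tier("remote", shared=True, can_write=True))
```

The exact cutoffs matter less than having them written down, so that a remote shared writer cannot be approved through the same path as a local reader.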

Next, inventory permissions.

Do not stop at the label on the server. Document what underlying systems it can touch, whether it can read, write, delete, or trigger actions, and what identity it uses to do that work. The host may manage permissions at the connection layer, but your operating risk still comes from what the server can actually reach.
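A permission inventory can record, per underlying system, which actions the server can take and which identity it acts as. The sketch below is a minimal illustration. The `Grant` record, the `MUTATING` action set, and the sample "docs-reader" server are assumptions, chosen to show a label that understates what the server can reach.

```python
from dataclasses import dataclass
from typing import FrozenSet, List

# Actions treated as state-changing in this sketch (an assumption).
MUTATING = frozenset({"write", "delete", "trigger"})


@dataclass(frozen=True)
class Grant:
    system: str               # underlying system the server can touch
    actions: FrozenSet[str]   # subset of read / write / delete / trigger
    identity: str             # credential the server acts as


def mutating_grants(grants: List[Grant]) -> List[Grant]:
    """Return the grants that allow anything beyond reading."""
    return [g for g in grants if g.actions & MUTATING]


def summarize(label: str, grants: List[Grant]) -> List[str]:
    """Render one line per grant, marking which ones can change state."""
    lines = []
    for g in grants:
        risk = "MUTATING" if g.actions & MUTATING else "read-only"
        actions = ", ".join(sorted(g.actions))
        lines.append(f"{label}: {g.system} as {g.identity} [{actions}] {risk}")
    return lines


# Invented example: the label says "reader", the grants say otherwise.
docs_reader = [
    Grant("wiki", frozenset({"read", "write"}), "svc-docs"),
    Grant("drive", frozenset({"read"}), "svc-docs"),
]

if __name__ == "__main__":
    print("\n".join(summarize("docs-reader", docs_reader)))
```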

Then add observability.

You want to know which server was called, by whom, against what system, and what happened next. When an agent produces a bad outcome, teams need enough traceability to tell the difference between a model mistake, a server failure, and a permissions design problem.
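A minimal version of that traceability is one structured record per tool call. The sketch below uses only the Python standard library. The field names, the `OUTCOMES` set, and the `tool_call_record` function are illustrative assumptions, not an MCP logging format.

```python
import json
import uuid
from datetime import datetime, timezone
from typing import Optional

# Outcome labels assumed for this sketch.
OUTCOMES = {"ok", "server_error", "permission_denied", "timeout"}


def tool_call_record(server: str, tool: str, caller: str, target_system: str,
                     outcome: str, detail: str = "",
                     trace_id: Optional[str] = None) -> str:
    """Build one JSON log line describing a single tool call."""
    if outcome not in OUTCOMES:
        raise ValueError(f"unknown outcome: {outcome!r}")
    record = {
        "trace_id": trace_id or uuid.uuid4().hex,
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "server": server,                # which server was called
        "tool": tool,
        "caller": caller,                # by whom
        "target_system": target_system,  # against what system
        "outcome": outcome,              # what happened next
        "detail": detail,
    }
    return json.dumps(record, sort_keys=True)


if __name__ == "__main__":
    print(tool_call_record("ticket-writer", "create_issue", "agent:triage",
                           "ticketing", "permission_denied",
                           detail="token lacks write scope"))
```

With records like these, a bad answer that follows only `ok` calls points toward the model, while `server_error` or `timeout` points toward the server and `permission_denied` toward the permissions design.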

Finally, design for partial failure.

Assume one server will be slow, unavailable, or returning stale data while the rest of the workflow still functions. Good agent products fail clearly, degrade cleanly, and avoid turning a missing MCP dependency into a confident but wrong answer.
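One way to sketch "fail clearly" is to wrap every server call in a timeout and return an explicit result instead of letting the error vanish. The example below uses `asyncio.wait_for` from the standard library. The `ToolResult` type, the `call_server` wrapper, the wording in `message_for_agent`, and the three stand-in lookups are hypothetical and do not come from any MCP SDK.

```python
import asyncio
from dataclasses import dataclass
from typing import Any, Awaitable, Callable, Optional


@dataclass
class ToolResult:
    server: str
    ok: bool
    value: Any = None
    error: Optional[str] = None


async def call_server(server: str, call: Callable[[], Awaitable[Any]],
                      timeout_s: float) -> ToolResult:
    """Run one server call with a deadline and report failure explicitly."""
    try:
        value = await asyncio.wait_for(call(), timeout=timeout_s)
        return ToolResult(server, True, value)
    except asyncio.TimeoutError:
        return ToolResult(server, False, error=f"timed out after {timeout_s}s")
    except Exception as exc:
        return ToolResult(server, False, error=f"{type(exc).__name__}: {exc}")


def message_for_agent(result: ToolResult) -> str:
    """Turn a result into text the agent sees, so a gap is never silent."""
    if result.ok:
        return str(result.value)
    return (f"The {result.server} server is unavailable ({result.error}). "
            "Say so instead of answering without it.")


# Stand-ins for real server calls, invented for this sketch.
async def fast_lookup() -> str:
    return "3 open tickets"


async def slow_lookup() -> str:
    await asyncio.sleep(1)
    return "late answer"


async def failing_lookup() -> str:
    raise ConnectionError("connection refused")


if __name__ == "__main__":
    for name, fn in [("tickets", fast_lookup), ("docs", slow_lookup),
                     ("repo", failing_lookup)]:
        result = asyncio.run(call_server(name, fn, timeout_s=0.05))
        print(message_for_agent(result))
```

The design choice here is that the failure travels with the result, so the rest of the workflow can continue while the missing capability stays visible.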

Why this matters now

MCP is no longer just a niche protocol for early adopters. The official MCP Registry is now live in preview at registry.modelcontextprotocol.io, which makes it easier for teams and downstream marketplaces to normalize MCP inside real workflows. The registry does not reduce the governance work. It increases the odds that teams will add servers faster than they add ownership, permission boundaries, and failure handling.

Once a dependency becomes normal, the teams that win are usually not the ones who adopted it first. They are the ones who learned how to govern it.

What to do this quarter

Run an MCP dependency review.

Keep it simple:

- List every MCP server your agents can access.
- Mark each one local or remote.
- Record owner, connected system, and write scope.
- Identify which workflows break if that server is unavailable.
- Flag the servers that need stronger permission review, logging, or fallback behavior.
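The last step of that review can be sketched as a small function over the list. The dictionary keys (`owner`, `location`, `write_scope`, `logging`, `workflows`, `fallback`), the flag wording, and the sample rows are assumptions made for illustration. Adapt them to whatever your list actually records.

```python
from typing import Dict, List


def review_flags(server: Dict) -> List[str]:
    """Return the follow-up actions a single review row calls for."""
    flags = []
    if not server.get("owner"):
        flags.append("assign a named owner")
    if server.get("location") == "remote" and server.get("write_scope"):
        flags.append("permission review: remote server with write scope")
    if not server.get("logging"):
        flags.append("add tool-call logging")
    if server.get("workflows") and not server.get("fallback"):
        flags.append("define fallback behavior")
    return flags


# Sample review rows, invented for illustration.
servers = [
    {"name": "repo-search", "owner": "dana", "location": "local",
     "write_scope": False, "logging": True,
     "workflows": ["code review"], "fallback": True},
    {"name": "ticket-writer", "owner": "", "location": "remote",
     "write_scope": True, "logging": False,
     "workflows": ["bug triage"], "fallback": False},
]

if __name__ == "__main__":
    for row in servers:
        print(row["name"], review_flags(row))
```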

Most teams do not need a big platform initiative first. They need a visible list, clear ownership, and a rule that new MCP servers do not enter production as untracked glue.

Builder Tip

Create an MCP dependency register before you add your fifth server.

Quick Hits

The most dangerous MCP server is usually not the most complex one. It is the one everyone assumes is harmless.

If a server can write, not just read, it deserves a stricter review path.

Remote shared MCP servers should be treated more like internal platform services than developer utilities.

Standard protocols reduce integration pain. They do not remove governance work.